The video is a separate, longer talk—not a recording of the event.

Slide 1

Slide 2

"Architecture-as-Code: From Vibe Coding to Enterprise-Grade AI-Assisted Development"

OPENING: The Seduction and the Hangover

Good evening everyone. I'm Rob Vugts, a Technical Architect and associate within the Equal Experts network. I've spent over 30 years working as a technical architect and software engineer in the banking sector — ING, Credit Suisse, UBS — and for the past year, as a self-employed freelancer working under the banner of AI-chitect, I've been dedicating pretty much all of my time to exploring whether AI can truly handle enterprise-grade engineering.

So, let me start with a confession. The first time I tried vibe coding — that term Andrej Karpathy coined for just "giving in to the vibes" and letting AI write your code — it felt like magic. You type a natural language prompt, hit enter, and code pours onto the screen faster than you can read it. Functions, tests, the feature works. You feel like a 10x developer.

And then... about 20 minutes in... the hangover hits.

The AI starts hallucinating libraries that don't exist. It forgets the code it wrote three prompts ago. It introduces subtle bugs that break your production build. You spend the next two hours debugging the "magic" code you generated in two minutes.

Slide 3

THE PROBLEM: Why Naive Vibe Coding Doesn't Fit the Enterprise

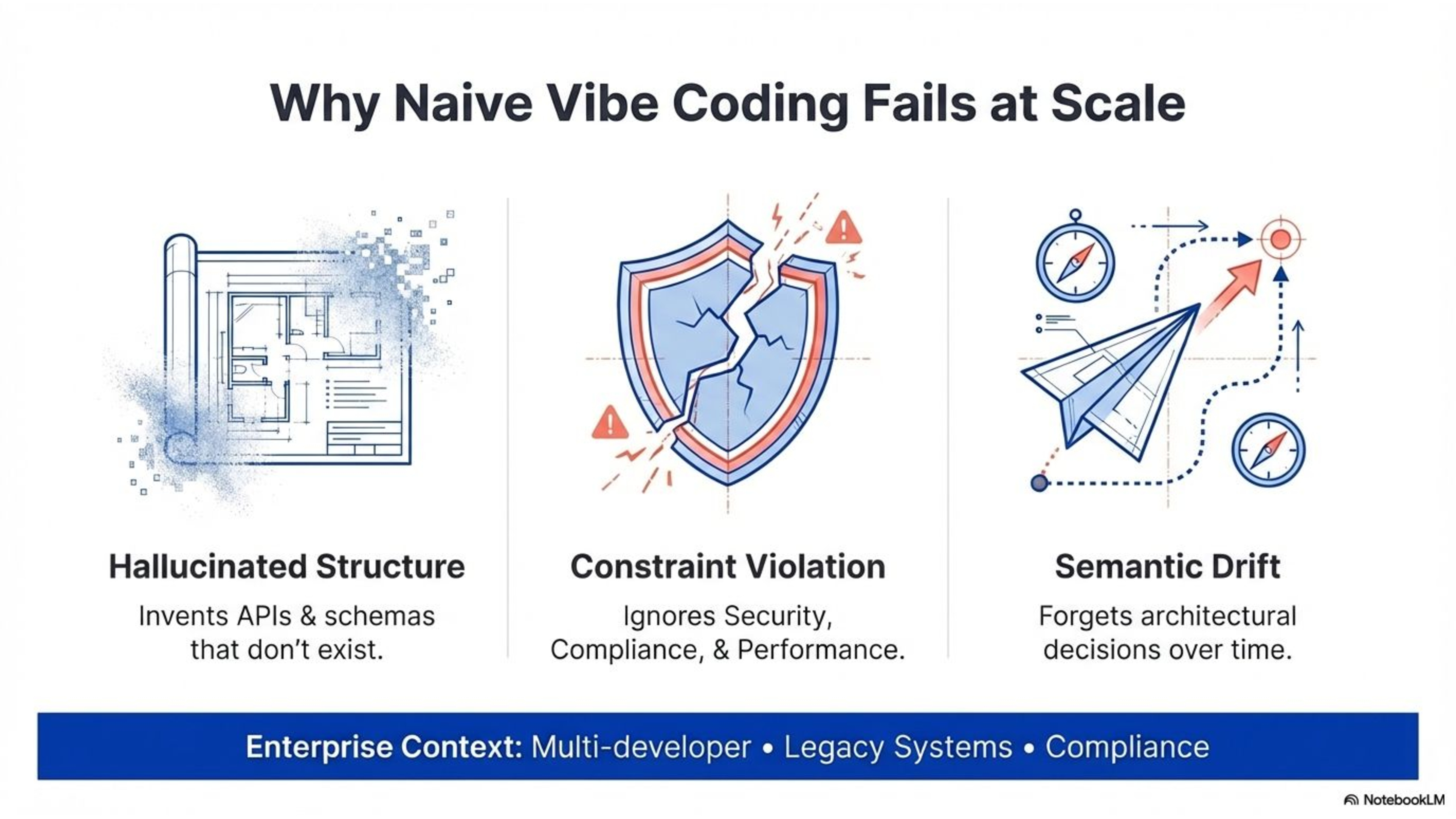

Now, for a weekend side project or a quick prototype, that's annoying but manageable. But here's the thing — most of us don't work on greenfield side projects. We work in enterprises. And in the enterprise, the problems compound catastrophically.

Think about what's different. You have multiple developers — sometimes dozens — working on the same codebase. You're rarely starting from scratch; you're extending and maintaining systems that have been around for years. There's no concept of "architecture-first" in vibe coding. And critically, there's typically zero consideration for non-functional requirements — performance, security, scalability, compliance.

Three predictable failures happen when you let AI loose without guardrails:

First — hallucinated structure. The AI invents API shapes, database schemas, and component boundaries based on its training data, not your actual system.

Second — constraint violation. NFRs like security and compliance are ignored because the AI has no persistent awareness of them.

Third — semantic drift. Over extended conversations, the AI gradually forgets earlier decisions. Each session creates a slightly different interpretation of your system.

So, a lot of people conclude: "AI is a toy. It's good for scripts, but not for real engineering." But they're wrong. The problem isn't the AI. The problem is the workflow.

Slide 4

THE PATH: A Maturity Ladder for AI-Assisted Development

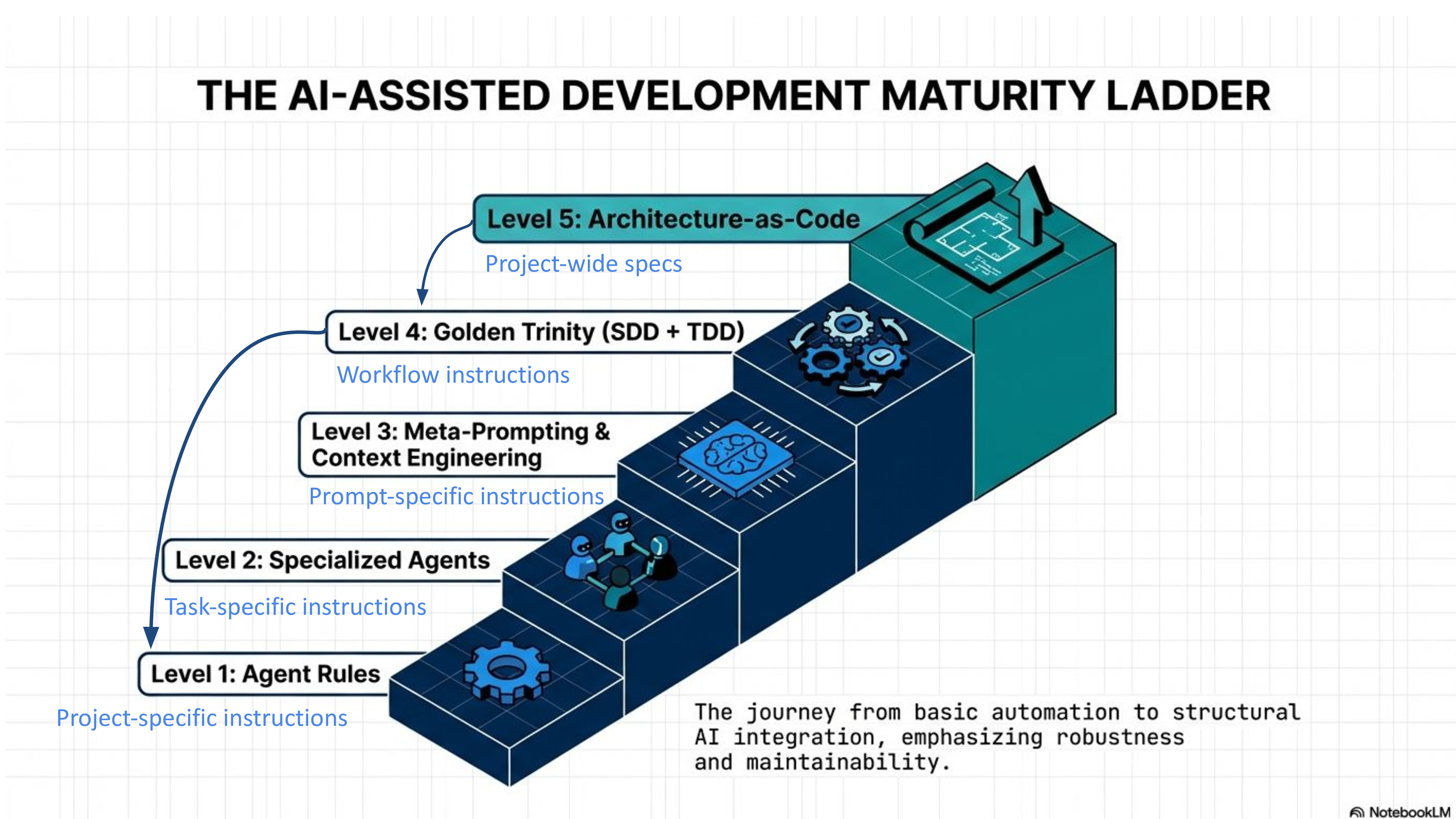

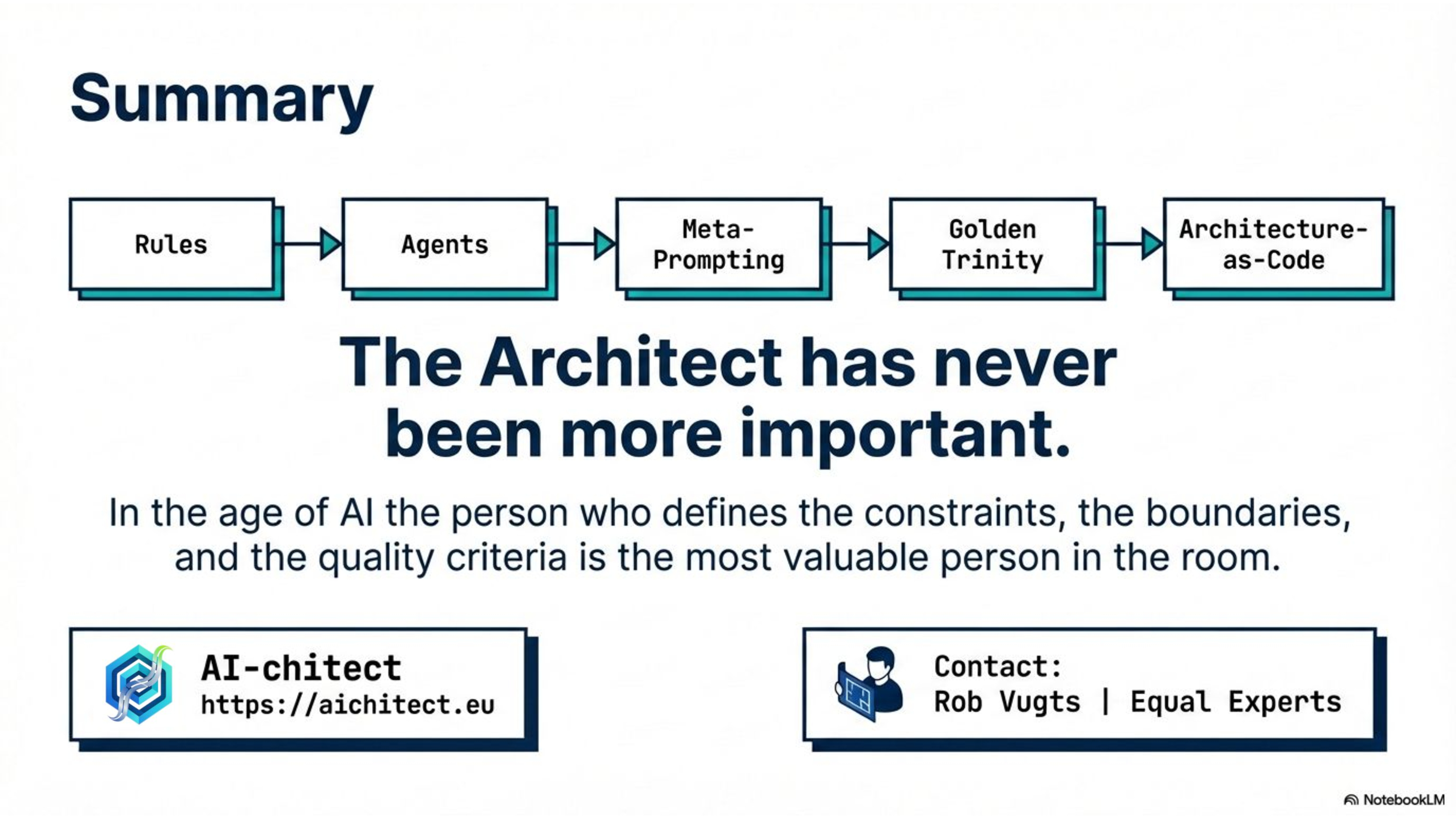

What I want to share with you tonight is a maturity path — a ladder you can climb to go from naive vibe coding to something I call Architecture-as-Code. Each step adds a layer of discipline that makes AI-assisted development safer, more predictable, and genuinely suitable for enterprise work.

The levels are: Agent Rules, Specialized Agents, Meta-Prompting, the Golden Trinity of Spec-Driven Development (SDD), Test-Driven Development (TDD), and vibe coding, and finally, Architecture-as-Code. Let me walk you through each.

Slide 5

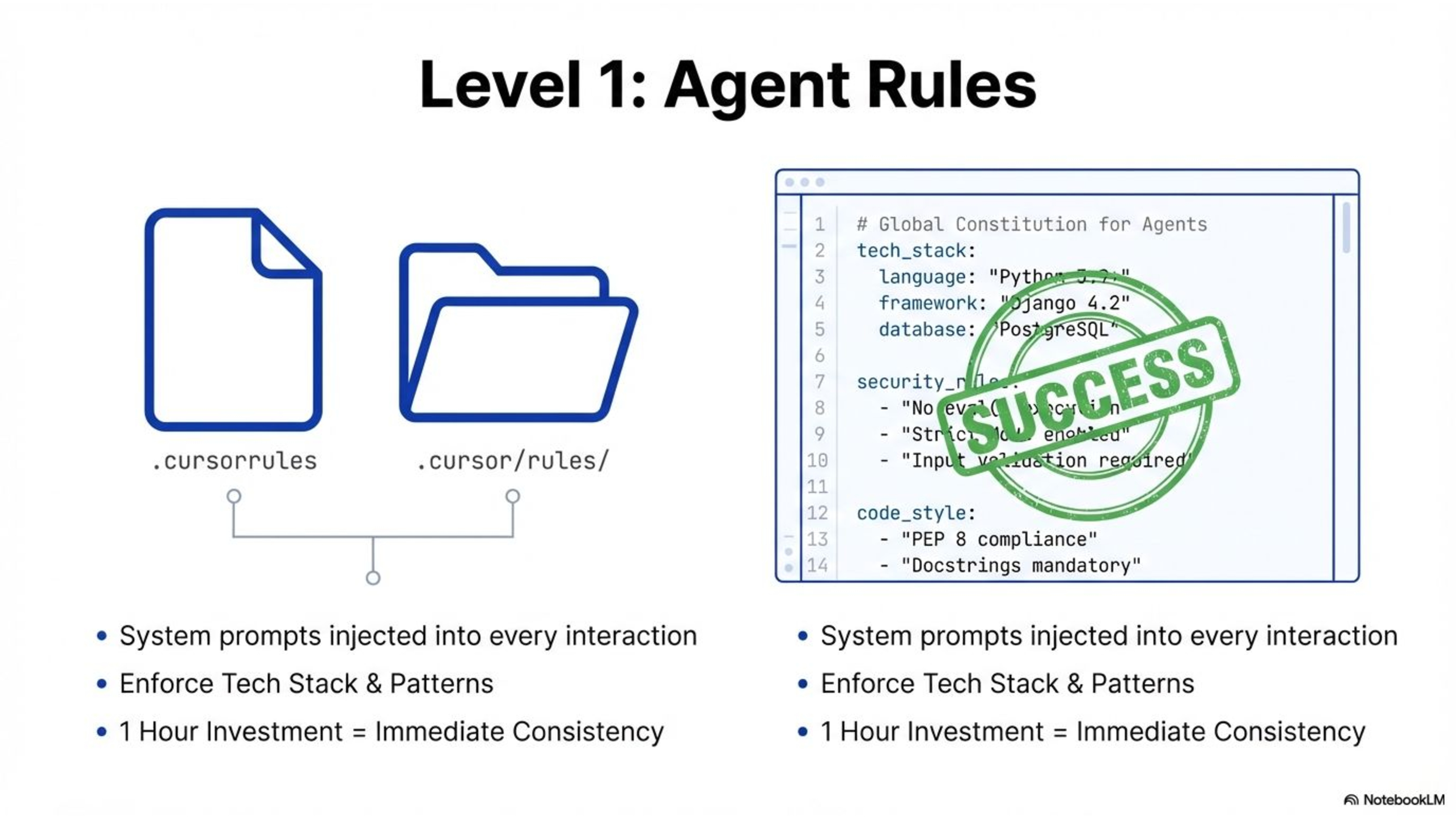

LEVEL 1: Agent Rules — Teaching the AI Your Standards

The simplest improvement you can make, today, this evening, is to set up agent rules. In an AI-native IDE like Cursor, you create a .cursorrules file or, in more recent versions, rule files in a .cursor/rules/ directory. These act as standing instructions that the IDE injects into the AI's context.

You define your tech stack, your coding conventions, your forbidden patterns. "Always use TypeScript strict mode." "Never use eval()." "Follow PEP 8." "Use parameterized queries — always."

You can make these rules trigger contextually — React rules activate only when editing .tsx files, testing rules activate when you mention tests. The AI stops guessing your preferences and starts following your standards automatically.
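To make the idea of contextual triggering concrete, here is a minimal Python sketch. It is not how Cursor implements rules internally; the `Rule` class, the rule names, and the glob patterns are all hypothetical and exist only to illustrate selecting rules by the file being edited.

```python
from dataclasses import dataclass
from fnmatch import fnmatch


@dataclass(frozen=True)
class Rule:
    """One agent rule. The fields are hypothetical, for illustration only."""
    name: str
    text: str
    globs: tuple[str, ...] = ()  # an empty tuple means "always apply"


RULES = [
    Rule("security", "Use parameterized queries. Never use eval()."),
    Rule("react", "Prefer function components and hooks.", ("*.tsx",)),
    Rule("python-style", "Follow PEP 8.", ("*.py",)),
]


def rules_for(path: str, rules: list[Rule] = RULES) -> list[str]:
    """Return the names of the rules that apply to the file being edited."""
    return [
        r.name
        for r in rules
        if not r.globs or any(fnmatch(path, g) for g in r.globs)
    ]


def build_system_prompt(path: str, rules: list[Rule] = RULES) -> str:
    """Concatenate the text of every applicable rule into one prompt."""
    active = set(rules_for(path, rules))
    return "\n".join(r.text for r in rules if r.name in active)
```

Calling `rules_for("src/components/Button.tsx")` picks up the always-on security rule plus the React rule, while a `.py` file gets the security rule plus the PEP 8 rule.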

It's a small investment — maybe an hour of work — and it immediately eliminates the most common source of friction: having to constantly correct the AI's style and choices.

Slide 6

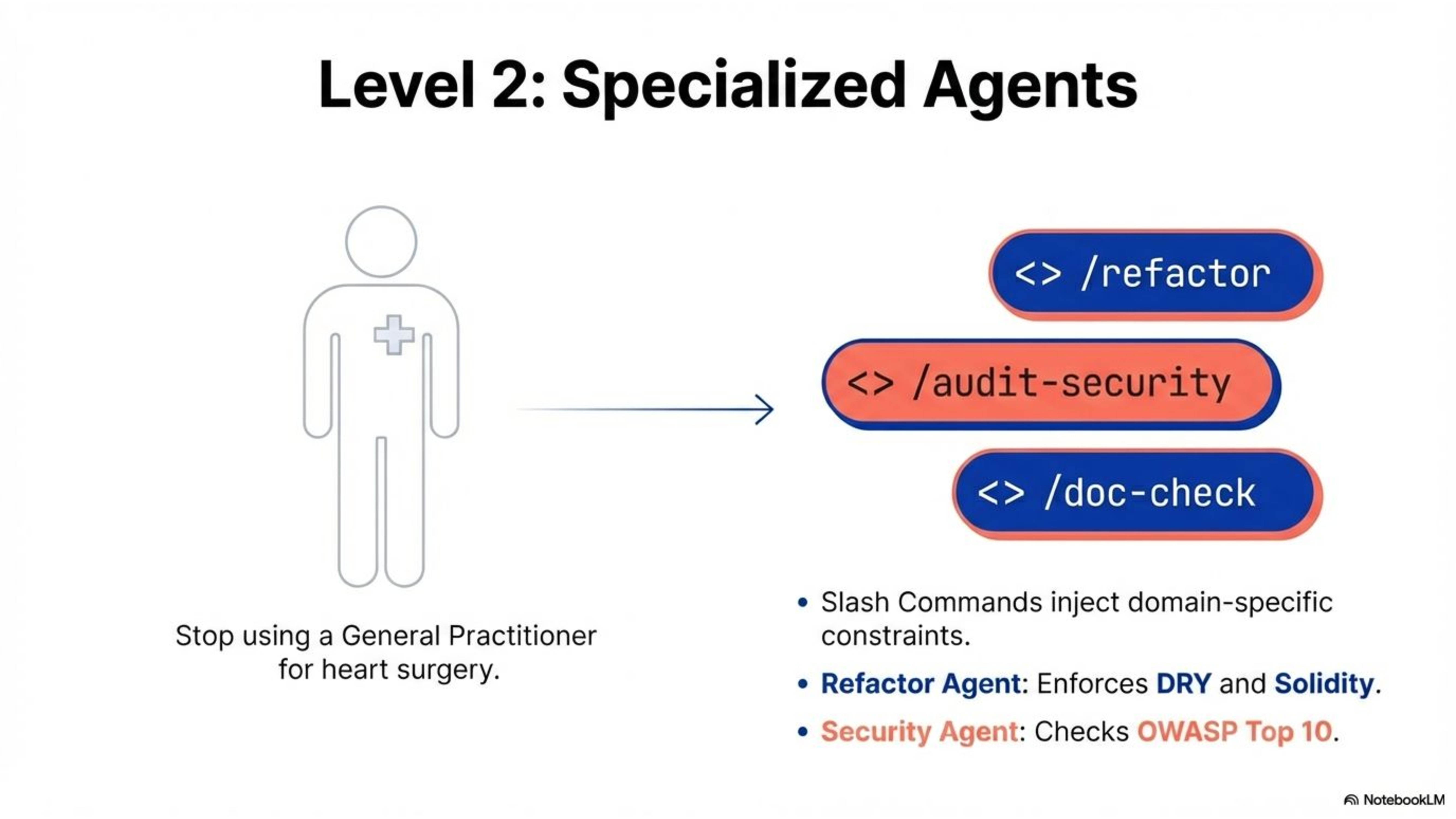

LEVEL 2: Specialized Agents — A Team of Experts

Next, you stop treating the AI as one generic tool and start building a team of specialists. In Cursor, you create slash commands (or skills): simple markdown files that inject domain-specific system prompts.

A /refactor command transforms the AI into a refactoring specialist that enforces DRY principles, function length limits, and security patterns without changing functionality. A /security-audit command makes it scan for OWASP (Open Worldwide Application Security Project) Top 10 vulnerabilities. A /code-review command turns it into a thorough reviewer that checks against your architectural rules.

You can also build commands for documentation standardization — ensuring every module has proper docstrings, every API endpoint is documented, every architectural decision is recorded.

The key insight is that a general-purpose LLM is like a general practitioner. You wouldn't ask a GP to perform heart surgery. These specialized agents give you a team of surgeons, each expert in their domain.

Slide 7

LEVEL 3: Meta-Prompting and Context Engineering

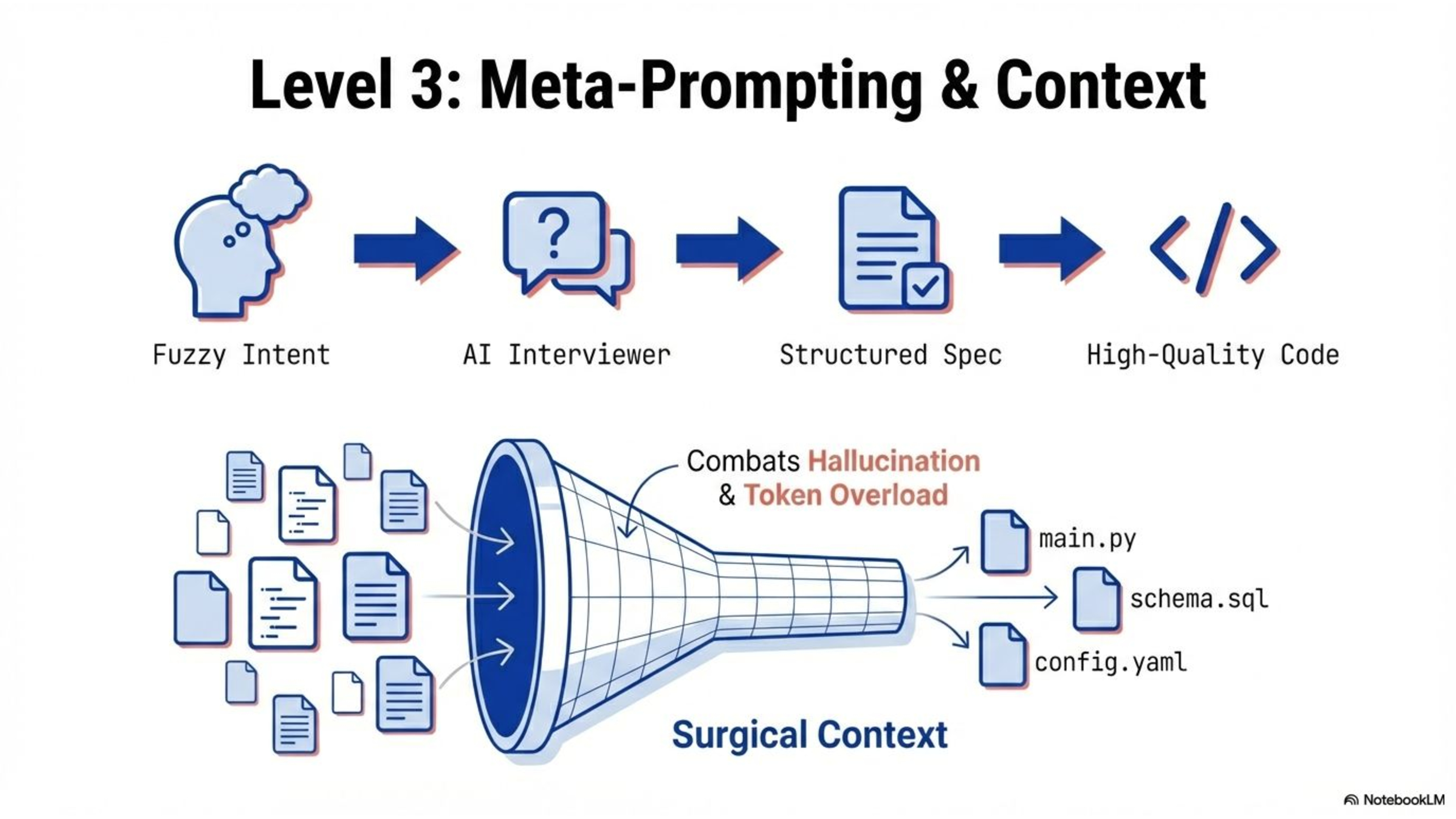

Level three is about the quality of conversation with your AI. Two techniques make a massive difference.

First, meta-prompting — using AI to write the perfect prompt for another AI. Instead of sending a fuzzy request like "build a user dashboard," you use a /create-prompt command that makes the AI interview you. It asks about edge cases, constraints, error states, accessibility requirements — things you hadn't even considered. The result is a detailed, structured prompt that dramatically improves output quality.

Second, context engineering. Every token you send to the AI costs money and eats into its attention. You need to be surgical about what context you provide. Feed the AI only the files it needs — on a need-to-know basis. Start fresh conversations at natural breakpoints. Think of it as clearing a whiteboard — if you keep writing over old drawings, eventually it becomes illegible scribbles.
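One way to picture the "need-to-know" discipline is a token budget. The sketch below is an assumption-laden illustration: the four-characters-per-token estimate is a crude heuristic, not a real tokenizer, and `select_context` and its parameters are hypothetical names.

```python
def estimate_tokens(text: str) -> int:
    """Very rough heuristic: about four characters per token (an assumption)."""
    return max(1, len(text) // 4)


def select_context(files: dict[str, str], priority: list[str], budget: int) -> list[str]:
    """Pick files in priority order, skipping any that would exceed the budget."""
    chosen: list[str] = []
    used = 0
    for path in priority:
        cost = estimate_tokens(files[path])
        if used + cost > budget:
            continue  # this file would overflow the budget, so leave it out
        chosen.append(path)
        used += cost
    return chosen
```

The point of the design is that the human decides the priority order (spec first, then the files being changed) and the budget forces everything else out of the conversation.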

These techniques directly combat hallucination and information loss — two of the biggest problems in AI-assisted development.

Slide 8

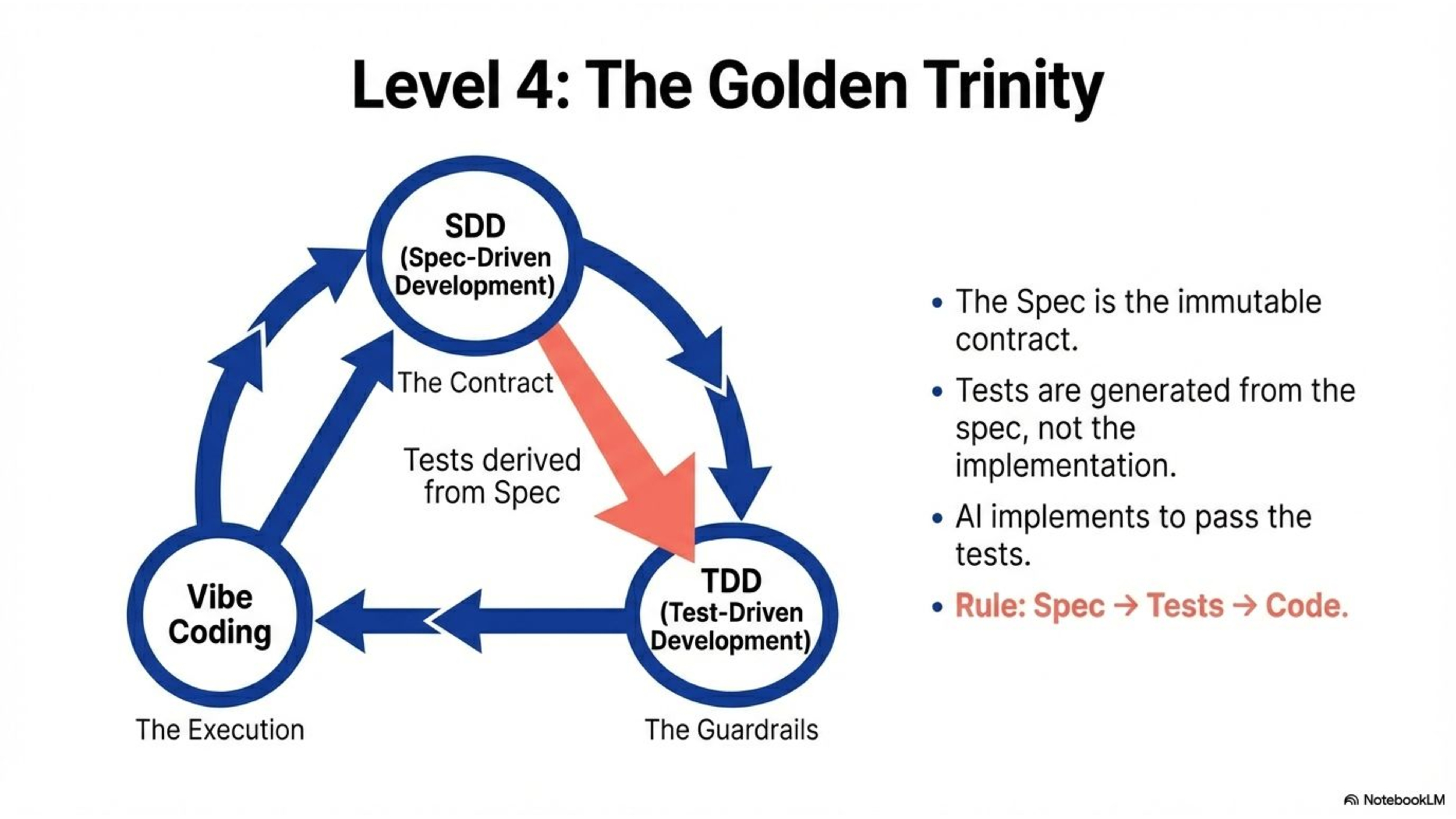

LEVEL 4: The Golden Trinity — Spec-Driven and Test-Driven Development

Now we get to the methodology that truly changes the game. I call it the Golden Trinity: Spec-Driven Development, then Test-Driven Development, then — and only then — vibe coding.

Spec-Driven Development means you create a spec.md file before any code exists. This is your contract — it defines the goal, the constraints, the data structures, the interfaces, and critically, the edge cases. This spec is immutable. The AI implements against it; it does not get to change it.

Test-Driven Development means you generate your test suite from the spec, not from the implementation. This is crucial: if the AI writes both the code and the tests, it's marking its own homework. By writing tests first, from the spec, you create an independent verification layer. That is your hallucination control.
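Here is a small sketch of what "tests from the spec" can look like in Python. The username rules in `SPEC_CASES` are a made-up spec excerpt, and `normalize_username` is a hypothetical implementation; the only point is the ordering: the cases exist before the code does.

```python
from typing import Callable, Optional

# Hypothetical excerpt of a spec.md, transcribed as data: each edge case
# is a (raw input, expected result) pair for a username normalizer.
SPEC_CASES = [
    ("Alice", "alice"),   # names are lower-cased
    ("  bob  ", "bob"),   # surrounding whitespace is stripped
    ("", None),           # empty input is rejected
    ("a" * 65, None),     # more than 64 characters is rejected
]


def check_against_spec(impl: Callable[[str], Optional[str]]) -> list[str]:
    """Run an implementation against every spec case; return failure messages."""
    failures = []
    for raw, expected in SPEC_CASES:
        actual = impl(raw)
        if actual != expected:
            failures.append(f"{raw!r}: expected {expected!r}, got {actual!r}")
    return failures


def normalize_username(raw: str) -> Optional[str]:
    """Candidate implementation, written only after the cases above existed."""
    name = raw.strip().lower()
    return name if 0 < len(name) <= 64 else None
```

In a real project the same cases would more likely feed a parametrized pytest test; the plain function here just keeps the sketch dependency-free.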

Only then do you vibe code. The AI writes implementation to make the tests pass. It can run at full speed because you've removed the risk of structural failure. The spec is the map, the tests are the guardrails, and the AI lays bricks at superhuman speed within those constraints.

Slide 9

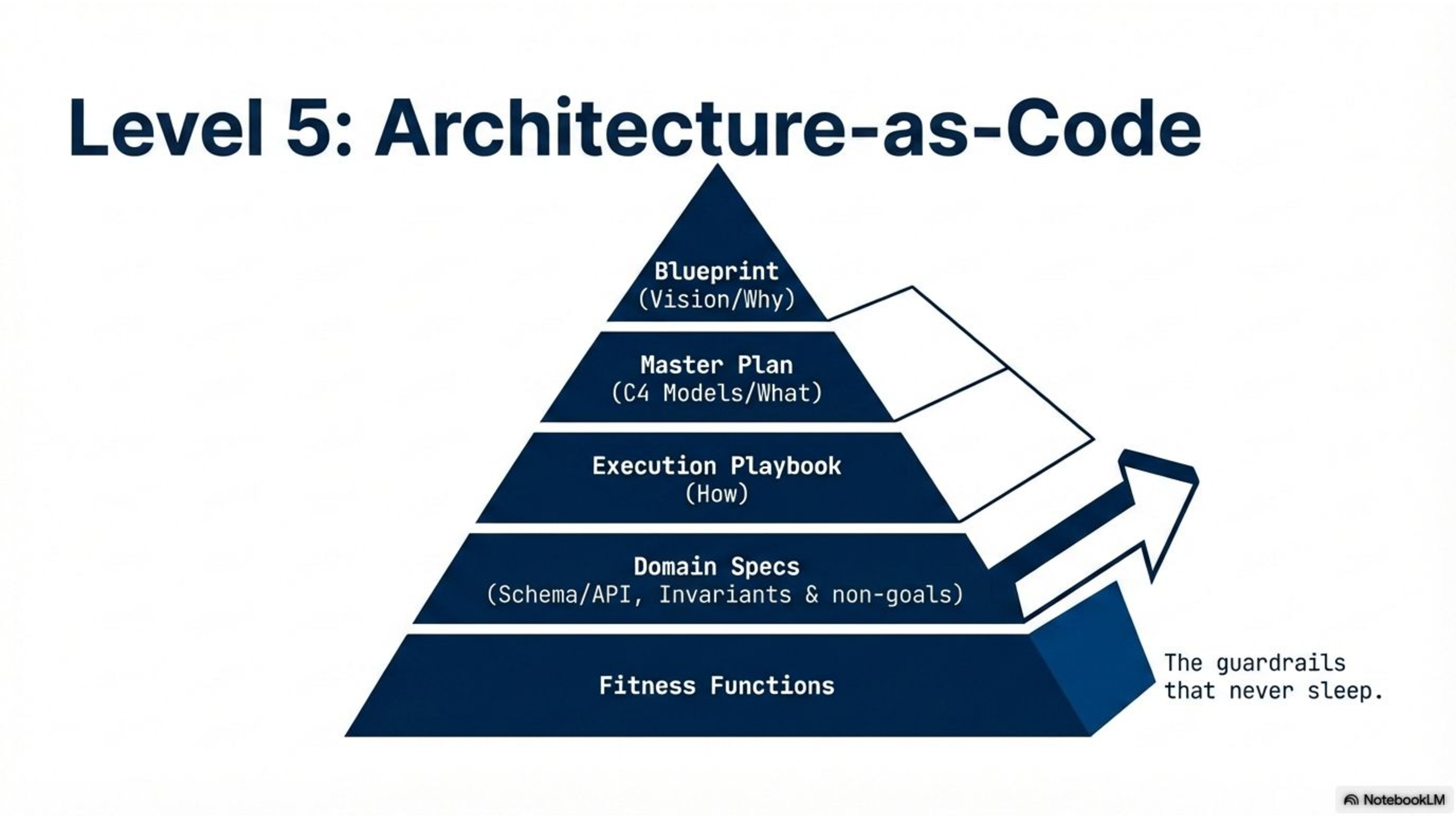

LEVEL 5: Architecture-as-Code — The Full System

The Golden Trinity is powerful for individual features. But at enterprise scale, you need something more. You need Architecture-as-Code.

Architecture-as-Code treats your architectural decisions as executable, version-controlled, automatically validated code — not static diagrams that drift from reality. Just as Infrastructure-as-Code transformed how we provision servers, Architecture-as-Code transforms how we define, enforce, and evolve software architecture.

It's built on a document hierarchy. At the top, you have a Blueprint — your vision, philosophy, and technical constraints. The "why." Then a Master Plan — your C4 models, ADRs, NFRs, technology stack. The "what." Then an Execution Playbook — readiness gates, phased execution plans, the AI execution protocol. The "how."

But here's what makes it truly powerful: you also define Non-Goals — explicit, permanent exclusions. Things the AI must never implement, suggest, or plan for. You define Domain Specifications — your glossary, invariants, schemas, API contracts. These are frozen. The AI implements against them; it doesn't get to reinvent them.

And at the base, you have Fitness Functions — automated tests that verify architectural characteristics, not just functional behavior. Unit tests ask "does this feature work?" Fitness functions ask "does this code belong?"

A fitness function might verify that every data model has tenant isolation, that the API layer never imports from the worker layer, that no UPDATE operations touch an immutable table, that your SQLAlchemy models match your schema.sql. These run on every commit, in your CI/CD pipeline, and they block violations before they merge. They are the guardrails that never sleep.
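As an illustration of the layer-boundary example, here is a minimal fitness-function sketch using Python's standard `ast` module. The package names (`app.api`, `app.worker`) are assumptions, and the check only sees absolute imports; relative imports such as `from ..worker import x` would need extra handling.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Collect the dotted module names imported by a piece of Python source."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            modules.add(node.module)
    return modules


def layer_violations(sources: dict[str, str], forbidden_prefix: str) -> list[str]:
    """Return the files (sorted by path) that import from the forbidden package."""
    bad = []
    for path, source in sorted(sources.items()):
        for module in imported_modules(source):
            if module == forbidden_prefix or module.startswith(forbidden_prefix + "."):
                bad.append(path)
                break  # one violation per file is enough to flag it
    return bad
```

In a test suite you would load every file under the API package into `sources` and assert that `layer_violations(sources, "app.worker")` is empty, so a single stray import fails the build.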

Slide 10

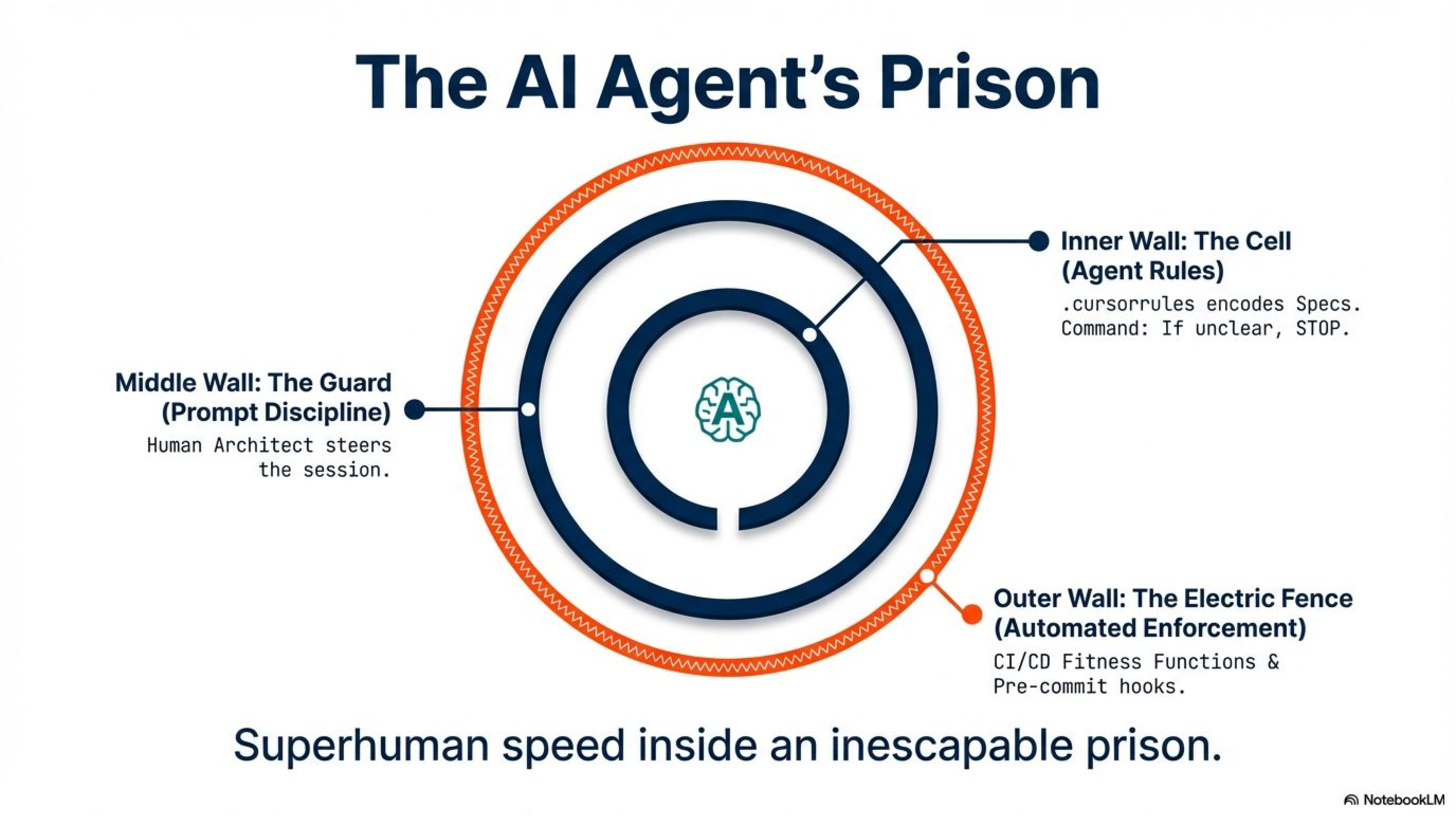

HOW THE ARCHITECTURE IS ENFORCED — THE AI AGENT'S PRISON

So we have all these architecture artifacts — the Blueprint, the specs, the invariants, the non-goals. The question is: how do we actually enforce them? The answer is: we build a prison. Three walls, and the AI agent cannot escape any of them.

The inner wall is the cell: Agent Rules. Your .cursorrules file defines the AI's reality from the moment it wakes up. Before it writes a single line of code, it already knows what it cannot do. Specs are immutable — you may not invent new fields, collections, or state transitions. Invariants are absolute. TDD is mandatory — no implementation without tests. Non-goals are forbidden. In one of my projects, the rules file explicitly states: "If a spec is missing or unclear, STOP and ask. Do not guess. Do not implement." The agent is born into constraints. It never knows freedom.

The middle wall is the guard: Prompt Discipline. Every implementation prompt reinforces the rules. You remind the agent which specs to read, which governance docs apply, which schema is the source of truth. The human architect is the warden — steering each session, keeping the AI aligned with your intent.

The outer wall is the electric fence: Automated Enforcement. Even if something slips past the first two walls, pre-commit hooks and CI/CD fitness functions catch it before code merges. Layer boundary violations, schema drift, missing tenant isolation, forbidden patterns — all caught automatically. No escape. No parole.
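For the "forbidden patterns" part of that outer wall, a pre-commit style gate can be sketched in a few lines of Python. This is an illustrative assumption, not a specific hook framework: `find_eval_calls` and `gate` are hypothetical names, and the check only catches direct `eval(...)` calls, not aliased ones.

```python
import ast


def find_eval_calls(source: str, filename: str = "<staged>") -> list[str]:
    """Return 'file:line' locations of direct eval(...) calls, in line order."""
    tree = ast.parse(source, filename=filename)
    lines = sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id == "eval"
    )
    return [f"{filename}:{n}" for n in lines]


def gate(staged: dict[str, str]) -> int:
    """Return a process exit code: 0 lets the commit through, 1 blocks it."""
    hits = [h for name, src in staged.items() for h in find_eval_calls(src, name)]
    for hit in hits:
        print(f"forbidden eval() call at {hit}")
    return 1 if hits else 0
```

Because the check walks the syntax tree rather than grepping text, a comment or string that merely mentions `eval(` does not block the commit.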

We give the AI superhuman speed — inside a prison it cannot break out of. That's what makes the speed safe.

Slide 11

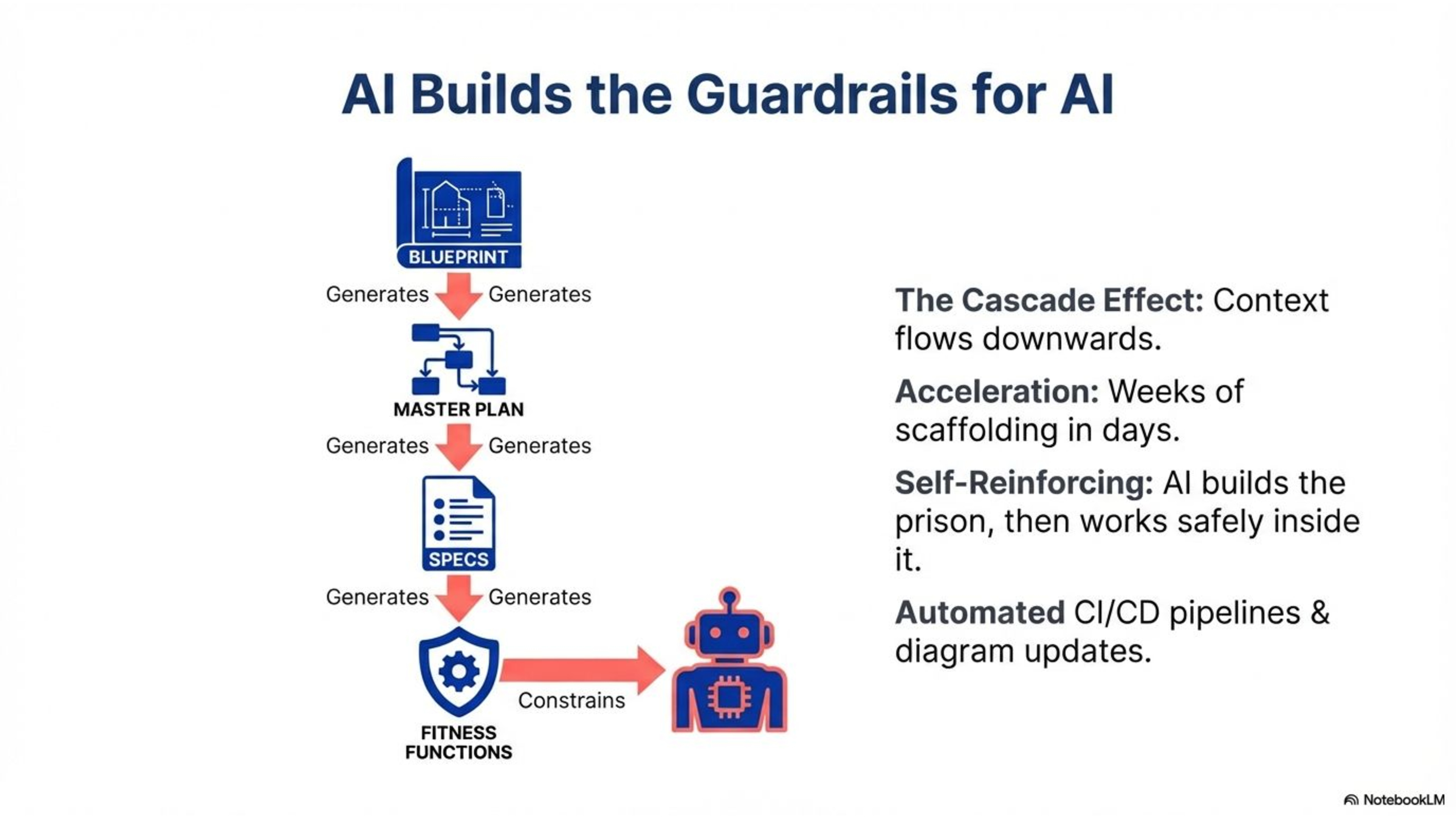

THE MAGIC: AI Builds the Guardrails That Keep AI in Check

Now, I know what you're thinking. "Rob, this is a massive upfront investment. Blueprint, Master Plan, Execution Playbook, domain specs, fitness functions — that could take weeks before we write a single line of feature code!"

And here's the beautiful thing: you use AI to build all of these artifacts.

The document hierarchy is designed to cascade. The Blueprint provides context to generate the Master Plan. The Master Plan provides context to generate the Execution Playbook. The domain specs provide context to generate the fitness functions. Each layer is generated from the one above it.

So you sit down with Claude or Gemini and say: "Here's my Blueprint. Help me generate the Master Plan — the C4 models, the ADR templates, the NFR targets." Then: "Here are my invariants and schema. Generate pytest fitness functions for each invariant." Then: "Based on these specifications, generate the cursor rules that will constrain AI agents during implementation."

What looks like weeks of work becomes days. AI builds the guardrails that keep AI in check. And you also use AI to build advanced CI/CD pipelines that perform code quality checks, security scans, run fitness functions, and even automatically update architecture diagrams so they never go stale.

This is the meta-level application of vibe coding. The Architect uses AI at superhuman speed to create the scaffolding; then the scaffolding ensures the AI implements correctly during feature development.

Slide 12

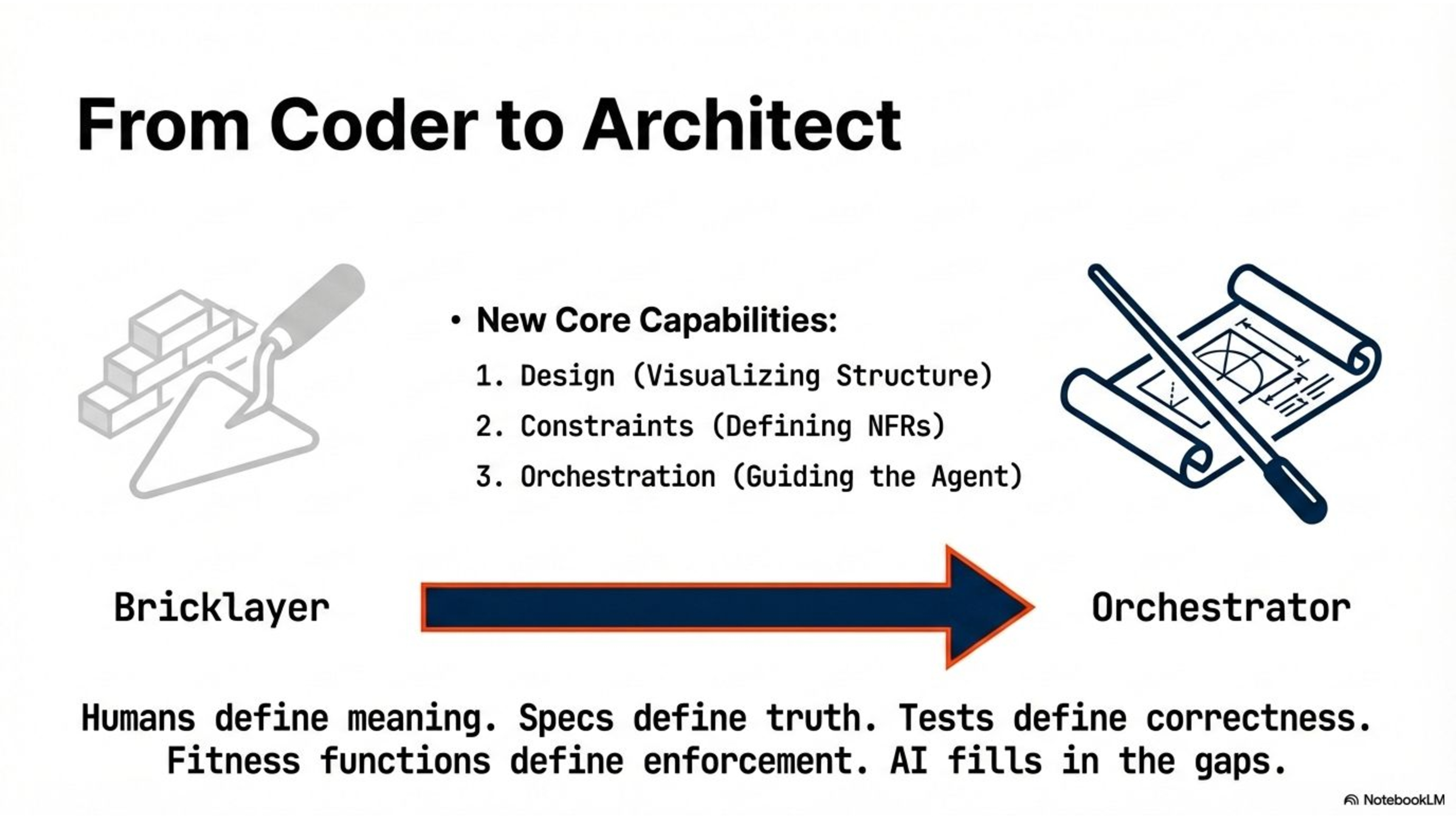

THE SHIFT: From Coder to Architect and Orchestrator

This brings us to the fundamental shift in what it means to be a developer — and an architect — in the age of AI.

We've spent 30 years teaching developers to be bricklayers. We prided ourselves on syntax knowledge, memorizing libraries, typing characters into a text file. That era is ending.

Your value is now defined by three capabilities. Design — can you visualize the system structure? Constraints — can you define the non-functional requirements? Orchestration — can you guide an intelligent agent to build exactly what you envisioned?

Developers become architects and orchestrators. You define the Design. You set the Constraints. You encode your intent as fitness functions. The CI/CD pipeline enforces your decisions automatically. The AI does the typing — within guardrails that never sleep.

Humans define meaning. Specs define truth. Tests define correctness. Fitness functions define enforcement. AI fills in the mechanical gaps. If this order is respected, AI-assisted development becomes a force multiplier instead of a liability.

Slide 13

CLOSING

So, to summarize the journey: start with agent rules; it takes about an hour. Add specialized agents for refactoring, security, and code review. Adopt meta-prompting and context engineering for sharper prompts and leaner context. Implement the Golden Trinity of spec-driven development, test-driven development, and only then vibe coding. And for enterprise-scale work, adopt Architecture-as-Code with fitness functions that enforce your architecture automatically.

The role of the architect has never been more important. In a world where AI can generate code at superhuman speed, the person who defines the constraints, the boundaries, and the quality criteria — that person is the most valuable person in the room.

Thank you.